Credits: Alex, Arsalan Khan, Dan Hopkins, Eddie Heironimus and Uzair Khan

1. EXECUTIVE SUMMARY

This report provides the Chief Information Officer (CIO) of Citadel Plastics (CP), a fictional organization, with recommendations and justifications to help her make a procurement decision on a Voice over Internet Protocol (VoIP) solution. In this paper, we analyze the business and technology issues faced by the organization. Our team performs this analysis by identifying the current issues with the telecommunications environment across CP's worldwide locations and the future needs of CP. For this report, we have made the following assumptions:

| General Assumptions | Business Assumptions | Technology Assumptions |
|---|---|---|
| - Final decision is with the CIO to choose the VoIP solution<br>- Various vendor business applications are flexible to connect with any other system | - The sales offices have high-speed broadband connections while the remote sites do not<br>- Each sales office has 15-20 users<br>- Each manufacturing site has 300-400 users but only a handful would be receiving CAD models | - File Transfer Protocol (FTP) is used to exchange CAD models between the engineering team in the sales office and the manufacturing sites<br>- CAD models are between 100 MB and 300 MB<br>- Currently the mobile computing options are limited |

Table 1: VoIP Solution Assumptions

Based on the above assumptions and keeping in mind the future growth of CP, our team recommends that the following two options be considered for the purchase of a VoIP solution:

| | Benefits | Risks | Costs |
|---|---|---|---|
| Option # 1 (Cloud) | - Easy to set up and maintain<br>- Simple plug and play functionality<br>- Low cost<br>- Full featured functionality | - No Quality of Service (QoS) on Internet traffic to cloud provider<br>- Risk of provider outage (both technical and operational)<br>- Lack of control over technical solution<br>- Privacy/Security: exposure of call data to cloud provider<br>- Updates/changes to the cloud would impact our deployment | - $24.99 per user per month for Standard account<br>- $34.99 per user per month for Premium (for Salesforce.com integration)<br>- $44.99 per user per month (10,000 toll free minutes) for Enterprise |
| Option # 2 (On-premise) | | | |

Table 2: VoIP Solution Options

While both options have pros and cons, our team has determined that, due to reliability considerations, the on-premise VoIP solution is the better choice. Although the on-premise solution is more expensive in the short term, we have assessed that it would prove more practical in the long term.

2. PROBLEM STATEMENT

The decision to deploy a VoIP solution can be a large hurdle for Citadel Plastics, especially for end users who are accustomed to our legacy systems of corporate communication. Aside from the difficulty of breaking the habit, older systems such as the Public Switched Telephone Network (PSTN) and Plain Old Telephone Service (POTS) have a proven track record of long-term stability. Regardless, these systems are outdated technologies that no longer fit the business growth we are experiencing. Given our increasing dependency on exchanging data between our manufacturing sites and sales offices, it is imperative that we switch to a solution that increases our broadband capacity. Transitioning to a VoIP solution seems to be the dominant alternative, so our main analysis will determine which vendor is better suited to satisfy our business needs, keeping in mind that the transfer of CAD files is now as important as the point of sale in CP's business model.

General assumptions:

- There are many VoIP solutions in the marketplace that we could cover, but we limit the scope of this report to the best two. The decision to pick one over the other is ultimately a subjective one for the CIO, as they all offer rather comprehensive feature support.

- All of the solutions we consider can interconnect with a large number of different interfaces, terminals and gateways depending on the requirements of a specific deployment, thus allowing a large amount of flexibility in business applications.

3. REQUIREMENTS

Our aim is to procure a solution that can 1) offer cost-effective and seamless communication to all our users, regardless of their role within CP, 2) merge disparate technologies such as mobile platforms and web-aware business applications, and 3) not simply enable efficiency through voice and data integration but also leverage telephony implementations across our manufacturing and sales force. The following table shows CP’s different sales and manufacturing locations:

| Sales Offices (15-20 people): Europe | Sales Offices: Asia | Sales Offices: North America | Sales Offices: South America | Manufacturing Sites (400 people) |
|---|---|---|---|---|
| Dublin, Ireland | Beijing, China | Mexico City, Mexico | Brasilia, Brazil | Haryana, India |
| Frankfurt, Germany | Tokyo, Japan | Ottawa, Canada | Bogotá, Colombia | Chandigarh, India |
| London, UK | Bombay, India | Washington, DC | Santiago, Chile | Dongguan, China |
| Madrid, Spain | Islamabad, Pakistan | | Pretoria, South Africa | Guangdong, China |
| Milan, Italy | Moscow, Russia | | | Tampa, Florida |

Table 3: Citadel Plastics’ Locations

3.1 Technology Overview (current)

CP has a global presence with two types of offices around the world. The sales offices are located in major cities with access to high-speed Internet connections. The manufacturing facilities are located in remote parts of the world with limited access to high-bandwidth connections. Currently the sales offices share their CAD files using FTP servers. There is no formal process in place, and with the recent growth in business there have been many file transfer delays.

Business Assumptions:

Based on the information, we have made the following assumptions:

Transfer Route

- The sales offices receive sales orders from customers via phone and the web.

- The engineering team creates the CAD files (100MB – 300MB) at the sales offices.

- Sales then sends CAD file to manufacturing site via an FTP server.

- Manufacturing site downloads the CAD file and builds the product.

WAN Connections

- The sales offices have T1 connections with 1.5 Mbps download and 1.5 Mbps upload speeds.

- The manufacturing sites have satellite connections with speeds of 1.5 Mbps download and 128 Kbps upload (which often experience delays).
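To put these link speeds in context, the following is a rough sketch of how long a single CAD file takes to move over a 1.5 Mbps link. It assumes 1 MB equals 1,000,000 bytes and ignores protocol overhead, latency and contention, so real transfers would be slower. The function name `transfer_seconds` is illustrative only.

```python
def transfer_seconds(size_mb: float, link_kbps: float) -> float:
    """Ideal transfer time in seconds (no overhead, no contention)."""
    size_kilobits = size_mb * 8 * 1000  # assumes 1 MB = 1,000,000 bytes
    return size_kilobits / link_kbps


T1_UP_KBPS = 1500           # sales office upload speed from the assumptions above
SATELLITE_DOWN_KBPS = 1500  # manufacturing site download speed from the assumptions above

for size_mb in (100, 300):
    up_min = transfer_seconds(size_mb, T1_UP_KBPS) / 60
    down_min = transfer_seconds(size_mb, SATELLITE_DOWN_KBPS) / 60
    print(f"{size_mb} MB file: upload ~{up_min:.1f} min, download ~{down_min:.1f} min")
```

Even under these ideal conditions, a 300 MB model occupies the full link for almost half an hour in each direction.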

Mobile Computing Options:

Every CP user operates onsite with limited mobile computing options. There are two shared stand-alone laptops at each sales site. These laptops are used by the sales staff for rare client-site meetings. Manufacturing facilities do not have any laptops onsite. Additionally, no mobile phones are provided to the users.

Interoperability/Integration:

Voice and data integration is a critical part of the network design. CP has many internal employees who work at different locations around the globe. These users need to be able to quickly and easily communicate, collaborate and share their data. External customers need to be able to submit orders and discuss any issues via phone or email. However, the current design does not make use of current integration and automation technologies. Initially this was not a problem, but with the recent growth in business, all members are experiencing issues. These issues range from transfer delays to voice quality problems when dealing with customers and vendors.

Many application silos have been created over time, and they were not designed to share information easily. The three key types of information at CP are sales orders, email and CAD files. Sales orders are received via email or phone. There is a dedicated mail server at the Washington, DC location that handles email for the entire organization. The mail server is running Microsoft Exchange 5.0 on Windows Server 2003. Generally the mail flow is fine as long as Internet and power are available. However, the hardware is outdated and out of support. Additionally, there is a dedicated Internet connection at every location to the outside world.

For FTP transfers, users have access to a dedicated workstation with a dedicated layer 2 private line. This setup is installed at each location. Both the sales team and the engineering team complain about delays during FTP transfers. The delays are being caused by multiple factors. The lack of a queue causes the download links to receive numerous downloads at the same time. Most of the manufacturing facilities have lower connection speeds and cannot handle that load all at once. This causes frustration between the manufacturing and sales departments.
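The effect of the missing queue can be illustrated with a small model. This is only a sketch under simplifying assumptions: simultaneous downloads split the link equally, there is no protocol overhead, and the example workload (four 200 MB files on a 1.5 Mbps link) is hypothetical. The function names are illustrative.

```python
def concurrent_finish_times(sizes_mb, link_kbps):
    """Finish times (seconds) when all downloads start together and share
    the link equally. Results are ordered from smallest to largest file."""
    finish, now, done_mb = [], 0.0, 0.0
    active = len(sizes_mb)
    for size in sorted(sizes_mb):
        # Each active download gets link_kbps / active until the next one completes.
        now += (size - done_mb) * 8000 * active / link_kbps
        done_mb = size
        finish.append(now)
        active -= 1
    return finish


def queued_finish_times(sizes_mb, link_kbps):
    """Finish times (seconds) when downloads run one at a time, in order."""
    finish, now = [], 0.0
    for size in sizes_mb:
        now += size * 8000 / link_kbps
        finish.append(now)
    return finish


files = [200, 200, 200, 200]  # hypothetical batch of CAD files, in MB
shared = concurrent_finish_times(files, 1500)
queued = queued_finish_times(files, 1500)
print("shared link, avg minutes:", sum(shared) / len(shared) / 60)
print("queued,      avg minutes:", sum(queued) / len(queued) / 60)
```

In this model the last file arrives at the same time either way, but with a queue the first files arrive much sooner, which is why the average wait drops.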

The voice network is entirely copper-based. Each site has a dedicated PBX with PRI lines that go out to the PSTN. The offices are using TDM phones with copper lines between the phone and the PBX. Though this is a traditional design, the phone company provides data, voice and power over the copper lines. This allows the phones to continue to run even when the local power company loses power. However, customers often complain about noise during phone calls and fast-busy signals, and users often resort to using their personal cell phones.

Network Topologies:

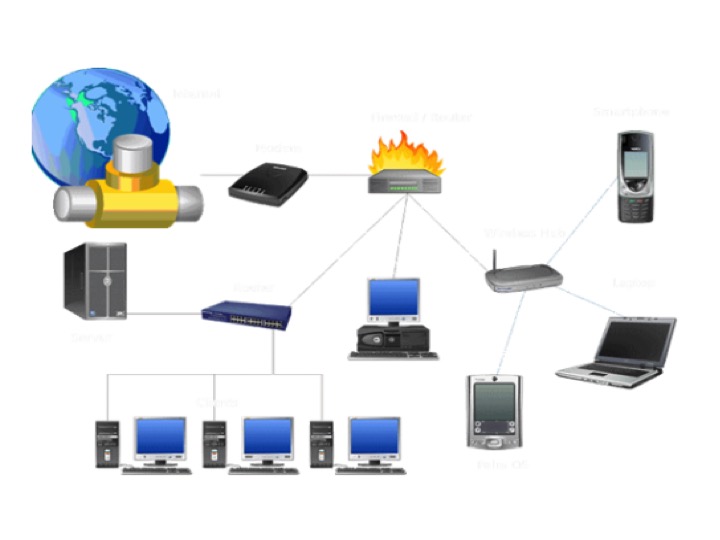

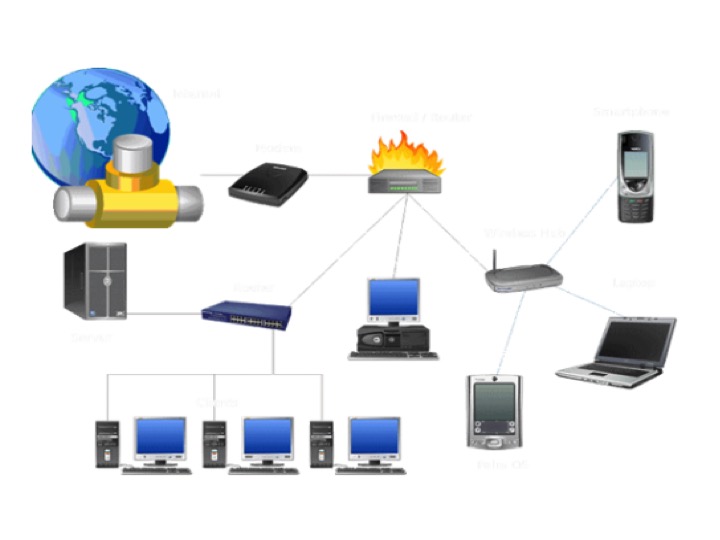

The current network topology is shown here below:

Figure 1: Current Network Diagram

This topology is used at both the sales offices and the manufacturing facilities. The diagram shows a dedicated uplink/downlink to the Internet. The speeds of this link vary between the sales offices and the manufacturing facilities. However the topology remains the same.

The router connects to a firewall, which is the only layer of protection from the outside world. CP is currently running a Juniper firewall with the default settings. There are no custom configurations on the firewall. The site is prone to attacks, which cause some of the Internet outages at the sites.

Behind the firewall are a dedicated switch, a wireless access point, a mail server and an FTP terminal for file transfers. The wireless access point has been turned off to reduce the load on the available bandwidth.

As mentioned earlier, the voice communication is currently configured over copper. All the TDM phones at each site connect to a router that is directly connected to a PBX. The PRI provider installs and manages the equipment and the call routing. This consumes a lot of power. During peak business hours, customers complain about static and voice degradation. The following figure shows the current voice communication setup:

Figure 2: Current Voice Communication Setup

Network Usage:

The T1 line at each sales office is overutilized. Users complain about transfer rates and slow Internet access during peak business hours. This causes delays when building orders for customers over the phone. In addition, each sales office sends 10 orders (CAD drawings) to the manufacturing facilities per day. These files are uploaded via a T1 connection and then downloaded by the manufacturing facility through a satellite connection.
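As a rough check on why the T1 is overutilized, the sketch below estimates how many hours per day the upload link is fully occupied by CAD transfers alone. It uses the 10 orders per day and 1.5 Mbps figures from this report; the 8-hour business day is an assumption introduced here, and overhead and competing traffic are ignored.

```python
ORDERS_PER_DAY = 10      # from this report
T1_UP_KBPS = 1500        # from this report
BUSINESS_DAY_HOURS = 8   # assumption for illustration


def daily_upload_hours(file_mb, orders=ORDERS_PER_DAY, link_kbps=T1_UP_KBPS):
    """Hours per day the link is saturated by CAD uploads (ideal conditions)."""
    total_kilobits = file_mb * orders * 8 * 1000  # assumes 1 MB = 1,000,000 bytes
    return total_kilobits / link_kbps / 3600


for file_mb in (100, 300):
    hours = daily_upload_hours(file_mb)
    share = hours / BUSINESS_DAY_HOURS
    print(f"{file_mb} MB files: {hours:.2f} h/day, {share:.0%} of the business day")
```

With 300 MB files, CAD uploads alone would saturate the link for more than half of an 8-hour day, leaving little headroom for web, email or any future voice traffic.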

Customers often complain about static and noise when calls are made from the office phones. This forces users to make calls from their own personal cell phones. After further investigation, the leading cause of the noise was found to be the limited number of lines to the PSTN.

The manufacturing sites receive calls from the sales offices but are only able to make two outside calls at any time. The users have managed to make this work, but calls are often missed and sales offices have to wait until the next day to get their orders in.

Security:

CP uses a Juniper firewall in its current environment. All workstations are equipped with stand-alone instances of Symantec Antivirus. There are no managed instances of AV clients anywhere on the network. Local machines are configured with Windows Firewall, but since all users have admin privileges, users often turn it off.

Security updates are pushed out manually and rarely verified. The vulnerability scanner reported 600+ missing security updates across the entire network. The doors to the network closets are often kept open to help with ventilation. This is a liability, as it allows easy access to the organization’s critical IT services.

Implementation:

The current implementation was never documented. Current managers at CP suggest that it was carried out by a couple of hardware technicians who were not experts in network design.

3.2 Technology Overview (future)

Regardless of which vendor we select for our VoIP solution, we need to acknowledge the variety of caveats inherent in a VoIP solution and define the scope as much as possible. What application? What platform? What protocols? VoIP is a broad term, describing many different types of applications installed on a wide variety of platforms, using a wide variety of both proprietary and open protocols that depend heavily on the preexisting data network’s infrastructure and services. Therefore, we need to narrow the future technology overview to the VoIP solution we want to explore.

Because VoIP technology, as opposed to POTS, interacts with the Internet and can be configured in various types of network topologies, it is very susceptible to attacks. According to David Persky, the evolution of VoIP is riddled with vulnerabilities because “the security aspect was an afterthought and as such, there has been this seemingly endless game of cat and mouse between security engineers and vendors fixing vulnerabilities.” Therefore we have to make sure that the future solution CP adopts includes the following preventive measures: 1) promotion of greater log analysis to provide a clearer view of voice and data traffic, 2) regular VoIP penetration testing with third-party tools such as Nessus, 3) segmentation of data and VoIP traffic into separate Virtual Local Area Networks (VLANs) to ensure that the VoIP VLANs cannot be used to gain access to the data VLANs, and vice versa, 4) creation of firewall rules to block all outbound traffic for known destination VoIP service ports, and 5) avoidance of a single point of failure by not putting the IPS inline with the VoIP traffic.

Some of the main vulnerabilities these measures will reduce are denial of service (DoS) attacks, man-in-the-middle attacks, call flooding, eavesdropping, VoIP fuzzing, signaling and audio manipulation, SPIT (voice spam) and voice phishing attacks. VoIP shares most of its vulnerabilities with POTS, except for the ones involving the web interface. Unlike our old POTS system, where a line is vulnerable only while it is actually in use, VoIP can be exposed to these vulnerabilities even when the line or device is inactive. Since VoIP integrates voice and data on the computer, it is possible to hack into the VoIP system if the computer it is connected to is online. This is possible because most users “overlook the fact that the VoIP phone can possess a web management Graphical User Interface (GUI), and can be compromised to then attack other VoIP and data resources, without placing any calls.” Vulnerabilities that are present in both POTS and VoIP include Caller ID spoofing and toll fraud, or phreaking.

Aside from sharing vulnerabilities, POTS and VoIP also share legislation that is applicable to both technologies. The two main pieces of legislation that any new solution we adopt must comply with are 1) the Communications Assistance for Law Enforcement Act (CALEA), which requires carriers and Internet Telephony Service Providers (ITSPs) to have a procedure and technology in place for intercepting calls, and 2) the Truth in Caller ID Act of 2009, which makes it unlawful for any person in the US to cause any caller identification service to transmit misleading or inaccurate information with the intent to defraud or cause harm. Based on these overall technology considerations, we can proceed to analyze our recommendations.

4. RECOMMENDATION # 1: Cloud VoIP Solution

The first solution we are recommending for consideration is a hosted PBX or “Cloud” based phone solution. There are a number of vendors that offer hosted PBX solutions that would enable a cost effective and simple VoIP solution, while also providing cutting edge technical features and functionality.

A hosted cloud provider would primarily offer CP the following benefits:

- No hardware: Beyond core network routers and switches, no PBX or other VoIP equipment would be necessary for the solution. This would reduce the capital expenditure and implementation costs that buying an “in-house” VoIP solution would incur.

- Ease of deployment: The initial and subsequent deployment of physical phones requires little effort with a cloud solution. CP can simply plug a phone into the network and the phone uses DHCP to automatically configure itself for the network.

- Web based administration: A cloud-based solution is controlled through a web administration portal that allows provisioning and administration from any Internet accessible computer.

- Full features and functionality: Most cloud solutions have cutting edge features and functions such as voice mail to email, automatic presence (availability) detection, etc. Additionally, as the provider improves its offering or adds features, CP would be able to leverage these.

- End Point Options: In addition to physical phones, most cloud providers offer “soft” phones that can be installed on a computer or smart phone, giving a user many different options for making and receiving calls.

- CRM Integration: Some cloud providers would give CP the ability to seamlessly log calls into certain CRM solutions (like Salesforce.com) to provide enhanced process efficiency, tracking and reporting.

A cloud based VoIP solution however does pose some risks and challenges for CP. Primarily, these risks relate to call quality and outages. Since all calls have to route through the cloud provider, without a dedicated Multiprotocol Label Switching (MPLS) connection to the selected cloud provider, calls would route over the public Internet and there are no QoS guarantees outside of CP controlled networks. Additionally, any outage impacting the cloud provider would inherently impact CP so proper and thorough due-diligence is needed during vendor selection.

4.1 Cloud VoIP Solution Project Implementation Plan

A cloud based VoIP solution reduces the complexity of a VoIP implementation for CP, which is a primary driver for choosing this alternative. First, CP would want to estimate the number of calls and concurrent calls it expects to place through the VoIP system. This data would drive plan selection (international calling plans) and ensure the proper connectivity to each location for supporting such a solution.

Second, CP would complete a technical assessment of its internal network architecture. For example, it would need to ensure that all core switches and routers allow for QoS, that the necessary firewall ports are open to allow for the UDP traffic of the phone vendor, and that Internet connectivity to each site can support the VoIP traffic. Most cloud vendors suggest an average of at least 64 Kbps per call (up/down), which can then be multiplied by the number of expected concurrent calls to create a baseline minimum connectivity standard.
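The baseline calculation described above can be sketched as follows. The 64 Kbps per-call figure comes from this section; the function names and the example call counts are illustrative assumptions, and the result applies to each direction (up and down).

```python
KBPS_PER_CALL = 64  # vendor-suggested average per call, in each direction


def min_bandwidth_kbps(concurrent_calls, kbps_per_call=KBPS_PER_CALL):
    """Baseline bandwidth needed in each direction for the given call count."""
    if concurrent_calls < 0:
        raise ValueError("concurrent_calls must be non-negative")
    return concurrent_calls * kbps_per_call


def max_concurrent_calls(link_kbps, kbps_per_call=KBPS_PER_CALL):
    """Calls a link could carry if it were used for voice only."""
    return link_kbps // kbps_per_call


print(min_bandwidth_kbps(10))      # 10 concurrent calls -> 640 Kbps each way
print(max_concurrent_calls(128))   # 128 Kbps satellite uplink -> 2 calls
print(max_concurrent_calls(1500))  # 1.5 Mbps T1 -> 23 calls, with no data traffic
```

Under this rule of thumb, the 128 Kbps satellite uplink at the manufacturing sites is the tightest constraint for a cloud deployment.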

After the planning stage of the implementation is complete, CP could use the cloud provider’s web based control panel to set up each extension, voicemail box, user, etc. for each phone that it will deploy (the physical phone itself does not need to be configured).

When a phone arrives onsite, the user can simply plug it into the network. The phone will automatically configure itself with a DHCP-issued address and contact the cloud provider’s website to download its assigned profile. This will reduce the need for IT staff to physically support the VoIP rollout at each location, saving CP additional implementation funds.

4.2 Cloud VoIP Solution Disaster Recovery

In the event of a disaster, a cloud solution provides CP with a number of options.

Since the cloud VoIP solution is offsite, it is inherently removed from any disasters that directly impact the continuity of CP, as access to the phone system requires only an acceptable Internet connection. Should an event occur that impacts CP operations in any way, calls to CP would still be received since they route through the cloud provider’s network. Since most cloud providers allow for rollover to mobile phones, calls could still route to the intended recipient or, in the worst case, go to voice mail.

Additionally, most cloud providers have “soft phones” that enable calls to be made and received, using the same number/extension, from software installed on a user’s computer or smart phone. So in the event of a disaster, we would develop a number of procedures that accommodate ongoing use of the cloud phone system in a variety of ways, assuming a user has an acceptable Internet connection.

While a cloud solution would inherently offset most of the technical disaster recovery needs, it would expose CP to the disaster recovery solution of the provider. Therefore, when we recommend a specific vendor, we will ensure proper due diligence is undertaken on the cloud vendor’s strategy, processes and procedures.

4.3 Cloud VoIP Solution Failover Remediation

From a technical perspective, a cloud solution means that CP simply has to consider redundancy in its local area networks and Internet connections, as we would offload the technical failover mechanisms to the chosen cloud provider. In this sense, we can use the redundancy we’ve already built into the existing LAN and WAN to hedge against issues reaching the cloud phone provider.

In the event of a network or Internet failure, CP’s phone system would technically not go down because all calls route through the cloud provider. As mentioned in the Disaster Recovery section, calls could automatically reroute to mobile devices or “soft” phones to reach the intended recipient or extension.

However, since CP would effectively be outsourcing its VoIP solution, CP would also outsource the failover remediation to the selected cloud provider and would be exposed to any outage that the cloud provider may have. Industry leading cloud providers have internal failover remediation solutions and processes that would be more redundant and resilient than what CP could likely afford. However, outages do occur, and we would be beholden to the cloud provider for resolution if a system outage were to occur.

4.4 Cloud VoIP Solution Vendors, Price, SLAs and Value

There is a growing number of cloud based / hosted PBX options available for CP to choose from, most of which cater to the mid-market. Industry leaders include RingCentral.com, Comcast, Verizon, XO Communications, Vonage (business solutions), 8×8 and Grasshopper.com.

Most cloud solutions offer their service at a per-user, per-month rate. Prices range from about $19.99 to $49.99 per month, which typically includes unlimited minutes and some allocation of international long distance minutes.

Unfortunately, Service Level Agreements (SLAs) are typically not offered for any solution that requires communication with the provider over the public Internet, as QoS cannot be ensured. However, if CP can establish an MPLS connection to a selected cloud provider, cloud providers will typically negotiate SLAs to provide some guarantees.

5. RECOMMENDATION # 2: On-Premise VoIP Solution

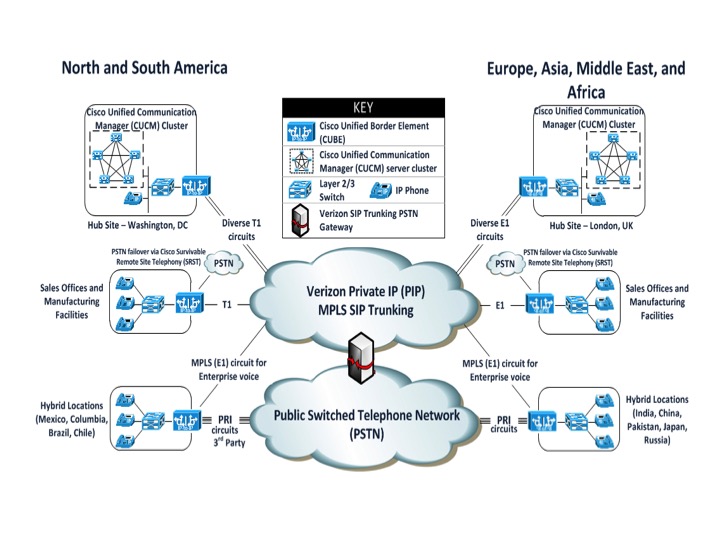

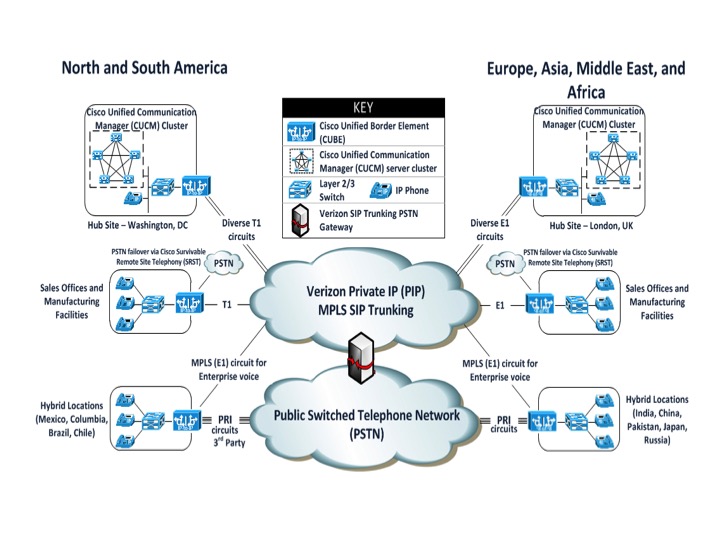

Verizon was chosen as the network provider due to its substantial global footprint for both MPLS and SIP Trunking connectivity. Verizon also receives high marks for its Voice over IP service portfolio from industry analysts such as Gartner. Verizon provides full VoIP services (i.e., local, long distance, and international) to North America and most of Europe. Countries that are not covered by full VoIP services will use a hybrid approach that employs third-party voice services to fill the gaps. In all cases, MPLS connectivity will allow each country to realize cost savings by directing intra-company calls across the MPLS network.

In the site listing below, the sites in red have full VoIP services from Verizon. For the blue sites, Verizon is able to provide international and intra-company VoIP services, and the customer will need to order local services via ISDN PRI or some other PSTN connectivity from a third-party provider. The purple sites have MPLS connectivity only, so the customer will need to order local, long distance, and international service from a third-party provider. The customer’s dial plan will be configured so that intra-company calls are sent over the MPLS connection directly to the called site, which still allows cost savings by bypassing the tolls for those international calls.

5.1 On-Premise VoIP Solution Project Implementation Plan (for hub sites)

CP will employ a Cisco VoIP solution for call processing, using Cisco Unified Communications Manager (CUCM) in a multi-site, distributed call processing deployment in which a group of call processing servers operates as a cluster to form a single logical call processing server. Two hub sites will provide call signaling and application services to the network. A hub site in Washington, DC will provide direct support to the locations within North and South America, while another hub site in London, UK will support the locations within Europe, Asia, the Middle East, and Africa. Both the Washington, DC and London, UK hubs will have a CUCM Publisher server for CUCM configuration and two additional Subscriber servers for primary and backup call signaling and application services. Cisco Unified Border Element (CUBE) routers will provide the Session Border Control (SBC) functionality between CP and the Verizon SIP Trunking network provided over dedicated MPLS circuits. The following figure shows the different offices that would use this solution:

Figure 3: On-Premise VoIP Solution Locations

5.2 On-Premise VoIP Solution Disaster Recovery (for remote sites)

The remote sales and manufacturing offices will also have CUBE routers to terminate their Verizon SIP Trunking connections. The routers will use Cisco’s Survivable Remote Site Telephony (SRST) feature, which automatically detects the loss of call processing from the hub site’s CUCM and auto-configures the router to provide local call processing to the IP phones until network connectivity is restored, either locally or to the hub site. Each remote site will also be configured with two onboard Foreign Exchange Office (FXO) interfaces for Plain Old Telephone Service (POTS) lines to allow for emergency outbound dialing such as 911. The router will automatically redirect outbound calls to the FXO interfaces until connectivity is restored to the hub CUCM servers, at which time any new calls will again be sent over the WAN link.
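The fallback behavior described above can be modeled as a simple routing decision. This is a conceptual sketch only, not Cisco configuration or an actual SRST API; the class, method and enum names are hypothetical, and the keepalive check stands in for however the router detects loss of the hub CUCM.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    WAN_SIP_TRUNK = "wan"  # normal path: hub CUCM and SIP trunk over MPLS
    LOCAL_FXO = "fxo"      # fallback path: local analog lines


@dataclass
class RemoteSiteRouter:
    """Hypothetical model of a remote-site router with local fallback."""
    hub_reachable: bool = True

    def on_keepalive(self, hub_answered: bool) -> None:
        # Record whether the hub's call processing responded.
        self.hub_reachable = hub_answered

    def route_new_call(self) -> Route:
        # Only new calls are affected; calls in progress are not modeled here.
        return Route.WAN_SIP_TRUNK if self.hub_reachable else Route.LOCAL_FXO


router = RemoteSiteRouter()
router.on_keepalive(hub_answered=False)  # WAN outage detected
print(router.route_new_call())           # Route.LOCAL_FXO
router.on_keepalive(hub_answered=True)   # connectivity restored
print(router.route_new_call())           # Route.WAN_SIP_TRUNK
```

With two FXO interfaces per site, only two outbound calls could be carried at once while in fallback mode.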

For countries that have limited or partial SIP Trunking service with Verizon, a hybrid approach is required: the customer procures PSTN service from a third-party local provider and routes intra-company calls, international calls, or both across Verizon’s MPLS SIP Trunking network.

5.3 On-Premise VoIP Solution Failover Remediation (for network sizing)

To conserve bandwidth, CP will use the compressed G.729a codec, which requires 33 kbps per call, compared to the G.711 codec, which requires 83 kbps per call. Verizon’s SLA includes a Mean Opinion Score (MOS) of 4.0 for G.729a traffic, which supports high quality voice.

The sales offices and major locations all have 15-20 people. The network was sized to support concurrent calls for half the users at any given site: 10 calls multiplied by 33 kbps equals 330 kbps of required bandwidth per site, which fits in a fractional T1 or E1 circuit with room to grow. Although the manufacturing sites have large numbers of employees, very few of them will have their own phone or spend much time on the phone, so the concurrent call requirements will be very similar to those of the sales offices and major locations. For resiliency, the hub sites will have two diversely routed T1 / E1 circuits. This will give the hubs alternate network paths for their own SIP Trunking connectivity and phone service, as well as backup paths for the remote sites that depend on the hubs for their signaling and call control.
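The sizing arithmetic above can be reproduced with a short sketch. The per-call codec rates and the 50% concurrency figure come from this section; the T1 and E1 line rates (1544 kbps and 2048 kbps) are the standard values, and the function name is illustrative.

```python
import math

CODEC_KBPS = {"G.729a": 33, "G.711": 83}  # per-call rates quoted in this section
T1_KBPS = 1544  # standard T1 line rate
E1_KBPS = 2048  # standard E1 line rate


def site_voice_kbps(users, codec, concurrency=0.5):
    """Bandwidth needed if `concurrency` of the users are on calls at once."""
    calls = math.ceil(users * concurrency)
    return calls * CODEC_KBPS[codec]


for codec in CODEC_KBPS:
    need = site_voice_kbps(20, codec)
    print(f"{codec}: {need} kbps, {need / T1_KBPS:.0%} of a T1, {need / E1_KBPS:.0%} of an E1")
```

For a 20-person site this gives 330 kbps with G.729a versus 830 kbps with G.711, which is why the compressed codec leaves more room to grow on a fractional circuit.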

5.4 On-Premise VoIP Solution Network Changes and Design

For the initial VoIP rollout, CP will converge voice and data on the LAN. The new MPLS connections will be dedicated solely to voice traffic. A separate WAN is already in place for data traffic. To support voice and data convergence on the LAN, QoS configurations will be implemented to prioritize time sensitive voice traffic over data traffic. QoS will also be configured on the WAN to prioritize voice traffic across the MPLS backbone. CP would use its LAN convergence experience as a stepping-stone to eventual full convergence over both the LAN and WAN.

Figure 4: On-Premise VoIP Solution Network Design

6. CONCLUSION

To summarize, the two VoIP solutions consolidate the voice and data networks of CP in order to provide more bandwidth for the exchange of the CAD models. The benefit of looking at two solutions is to see the choices that are available to us. This analysis also indicates that, whichever solution we proceed with, we have to take into consideration risks that revolve around people, processes and technologies. These risks include change management, circumvention of the new processes, obsolescence of the technologies and the vendors going out of business. Taking into account all these risks and the long-term benefits for the organization, we recommend the on-premise VoIP solution for Citadel Plastics.
